As artificial intelligence advances at a rapid pace, it is crucial to address the risks inherent in these powerful technologies. Ethical concerns about bias, explainability, and societal impact must be taken seriously to ensure that AI serves humanity.

Establishing robust guidelines for the deployment of AI is fundamental. This includes encouraging responsible research, ensuring data protection, and creating procedures for monitoring the impact of AI systems.

Furthermore, educating the public about AI, its limitations, and its implications is vital. Meaningful discussion between developers and the public can help shape the deployment of AI in a way that benefits everyone.

Securing the Foundations of Artificial Intelligence

As artificial intelligence advances, it is essential to fortify its foundations. This involves addressing ethical concerns, ensuring transparency in algorithms, and establishing robust protection measures. It is equally important to encourage collaboration between researchers and decision-makers so that AI develops in an ethical manner.

- Strong data governance policies are critical to prevent bias and ensure the integrity of AI systems.

- Regular monitoring and assessment of AI output are essential for identifying potential issues.
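
One way to picture the monitoring point above is a small check that compares the recent share of positive predictions against an expected baseline. The sketch below is illustrative only: the class name `OutputMonitor`, the baseline rate, the tolerance, and the window size are all assumptions, and a real deployment would likely track more than a single rate.

```python
from collections import deque


class OutputMonitor:
    """Tracks the share of positive predictions over a sliding window."""

    def __init__(self, baseline_rate, tolerance=0.15, window=100):
        # baseline_rate: share of positive outputs seen during validation (assumed known).
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)

    def record(self, prediction):
        # Store 1 for a positive prediction and 0 otherwise.
        self.recent.append(1 if prediction else 0)

    def drifted(self):
        # Wait until the window is full before judging.
        if len(self.recent) < self.recent.maxlen:
            return False
        rate = sum(self.recent) / len(self.recent)
        return abs(rate - self.baseline_rate) > self.tolerance
```

Calling `record` after each prediction and checking `drifted` on a schedule gives a simple signal that the model's output has shifted and may need review.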

Adversarial Attacks on AI: Defense Strategies and Best Practices

Adversarial attacks pose a significant challenge to the robustness of artificial intelligence (AI) systems. These attacks involve introducing subtle modifications into input data, causing AI models to produce incorrect or harmful outputs. To address this concern, robust defense strategies are crucial.

One effective approach is adversarial training, a technique that involves training AI models on both clean and adversarial data. This helps the model learn to resist likely attacks. Another strategy is input sanitization, which aims to remove or mitigate malicious elements from input data before it is fed into the AI model.
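
As a rough illustration of adversarial training, the sketch below trains a tiny logistic regression model on each clean example plus a perturbed copy of it. The perturbation uses the fast gradient sign method (FGSM), which is one common choice and not necessarily the one a given system would use. The toy data, the function names, and the values of `eps`, `epochs`, and `lr` are all assumptions made for the example.

```python
import math
import random


def sign(v):
    # Returns -1, 0, or 1.
    return (v > 0) - (v < 0)


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(w, b, x):
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(w, b, x, y, eps):
    # For log loss, the gradient with respect to the input is (p - y) * w.
    # Moving each feature by eps along the gradient's sign increases the loss.
    p = predict_proba(w, b, x)
    return [xi + eps * sign((p - y) * wi) for xi, wi in zip(x, w)]


def train(data, eps=0.0, epochs=200, lr=0.1):
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            examples = [(x, y)]
            if eps > 0:
                # Adversarial training: add a perturbed copy with the same label.
                examples.append((fgsm(w, b, x, y, eps), y))
            for xe, ye in examples:
                err = predict_proba(w, b, xe) - ye
                w = [wi - lr * err * xi for wi, xi in zip(w, xe)]
                b -= lr * err
    return w, b


def make_data(rng, n=20):
    # Hypothetical toy data: class 0 near (-2, -2), class 1 near (2, 2).
    data = []
    for _ in range(n):
        for center, label in ((-2.0, 0), (2.0, 1)):
            x = [center + rng.uniform(-0.5, 0.5) for _ in range(2)]
            data.append((x, label))
    return data


def accuracy(w, b, data):
    hits = sum((predict_proba(w, b, x) >= 0.5) == bool(y) for x, y in data)
    return hits / len(data)


if __name__ == "__main__":
    rng = random.Random(0)
    data = make_data(rng)
    w, b = train(data, eps=0.5)
    attacked = [(fgsm(w, b, x, y, 0.5), y) for x, y in data]
    print("clean accuracy:", accuracy(w, b, data))
    print("accuracy under FGSM:", accuracy(w, b, attacked))
```

Setting `eps=0.0` in `train` gives ordinary training, so the two models can be compared on the same attacked inputs.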

Furthermore, ensemble methods, which combine multiple AI models to make predictions, can provide increased robustness against adversarial attacks. Regularly assessing AI systems for vulnerabilities and applying timely patches are also crucial for maintaining system security.
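
A minimal sketch of the ensemble idea, assuming a simple majority vote over three classifiers: the three toy models below are hypothetical stand-ins that each look at the input differently, so a perturbation that fools one of them does not automatically change the combined answer.

```python
from collections import Counter


def model_a(x):
    # Hypothetical classifier that looks only at the first feature.
    return int(x[0] > 0.5)


def model_b(x):
    # Hypothetical classifier that looks only at the second feature.
    return int(x[1] > 0.5)


def model_c(x):
    # Hypothetical classifier that looks at both features together.
    return int(x[0] + x[1] > 1.0)


def majority_vote(models, x):
    # Collect one label per model and return the most common one.
    # With an odd number of binary classifiers there is never a tie.
    votes = [model(x) for model in models]
    return Counter(votes).most_common(1)[0][0]


if __name__ == "__main__":
    models = [model_a, model_b, model_c]
    print(majority_vote(models, [0.9, 0.4]))
```

For the input `[0.9, 0.4]`, `model_b` votes 0 but the other two vote 1, so the ensemble still returns 1.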

By adopting a multi-faceted approach that combines these defense strategies and best practices, developers can significantly enhance the resilience of their AI systems against adversarial attacks.

Challenges of Ethical AI Security

As artificial intelligence progresses at an unprecedented rate, the realm of AI security faces a unique set of ethical considerations. The very nature of AI, with its capacity for autonomous decision-making and learning, raises novel questions about responsibility, bias, and transparency. Researchers must aim to embed ethical principles into every stage of the AI lifecycle, from design and development to deployment and monitoring.

- Addressing algorithmic bias is crucial to ensure that AI systems treat individuals fairly and impartially.

- Protecting user privacy in the context of AI-powered applications requires comprehensive data protection measures and explicit consent protocols.

- Guaranteeing accountability for the outcomes of AI systems is essential to foster trust and confidence in their application.

By taking a proactive and responsible approach to AI security, we can harness the transformative potential of AI while mitigating its potential harms.

Mitigating Risk Through Human Factors in AI Security

A pervasive challenge within the realm of artificial intelligence (AI) security lies in the human factor. Despite advancements in AI technology, vulnerabilities often stem from unintentional actions or decisions made by individuals. Training and awareness programs are essential in addressing these risks. By educating individuals about potential vulnerabilities, organizations can foster a culture of security consciousness.

- Consistent training sessions should focus on best practices for handling sensitive data, identifying phishing attempts, and following strong authentication protocols.

- Simulations can provide valuable hands-on experience, allowing individuals to apply their knowledge in realistic scenarios.

- Creating an atmosphere where employees feel confident reporting potential security issues is essential for effective response.

By prioritizing the human factor, organizations can significantly enhance their AI security posture and minimize the risk of successful attacks.

Protecting Privacy in an Age of Intelligent Automation

In today's rapidly evolving technological landscape, intelligent automation is revolutionizing industries and our daily lives. While these advancements offer tremendous benefits, they also pose new challenges to privacy protection. As algorithms become increasingly sophisticated and process more personal data, the potential for data breaches grows. It is essential that we develop robust safeguards to protect individual privacy in this era of intelligent automation.

One key aspect is promoting transparency in how personal data is collected, used, and shared. Individuals should have a clear understanding of the purposes for which their data is being processed.

Moreover, implementing comprehensive security measures is critical to prevent unauthorized access and exploitation of sensitive information. This includes encrypting data both in transit and at rest, as well as conducting frequent audits and vulnerability assessments.

Furthermore, promoting a culture of privacy awareness is crucial. Individuals should be informed about their privacy rights and responsibilities.

Danny Tamberelli Then & Now!

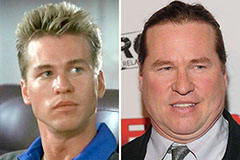

Danny Tamberelli Then & Now! Val Kilmer Then & Now!

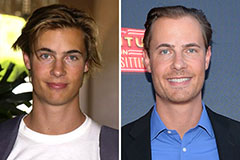

Val Kilmer Then & Now! Erik von Detten Then & Now!

Erik von Detten Then & Now! Kelly Le Brock Then & Now!

Kelly Le Brock Then & Now! Teri Hatcher Then & Now!

Teri Hatcher Then & Now!